C# Testing Interview Questions and Answers (2026) – Unit Testing, Integration Testing, E2E, Performance Testing & Best Practices

This is Part 10 of our C#/.NET interview series, and it is entirely dedicated to testing — from the fundamentals to the hard problems that arise in distributed production systems. The questions are organized into nine sections that mirror how testing responsibilities grow as systems get more complex: core unit testing skills, integration test environments, HTTP and gRPC API testing, contract testing, distributed systems testing, resilience and fault injection, UI and E2E automation, and performance.

The Answers are split into sections: What 👼 Junior, 🎓 Middle, and 👑 Senior .NET engineers should know about a particular topic.

Also, please take a look at other articles in the series: C# / .NET Interview Questions and Answers

- Part 1: Core Language & Platform Fundamentals

- Part 2: Types and Type Features

- Part 3: Collections and Data Structures

- Part 4: Async & Parallel Programming

- Part 5: Design Patterns

- Part 6: ASP.NET Core

- Part 7: SQL Database

- Part 8: NoSQL Databases

- Part 9: Microservices and Distributed Systems

- Part 11: Desktop Development

- Part 12: Mobile Development

Testing Fundamentals

❓ What makes a test a “unit test” in C#, and what is usually mislabeled as unit?

A unit test checks a single unit of behavior in isolation. In C#, that usually means a single class or method, executed without touching the real infrastructure.

What makes a test a real unit test

- Tests one unit of logic, not a full flow.

- A unit test verifies a small unit of behavior in isolation. External infrastructure, such as databases, network calls, and file systems, should normally be replaced with test doubles.

- All dependencies are mocked, stubbed, or faked.

- Runs in milliseconds and is fully deterministic.

Typical unit test example in C#

public class PriceCalculator

{

public decimal Calculate(decimal price, decimal taxRate)

=> price + price * taxRate;

}

[Fact]

public void Calculate_adds_tax_correctly()

{

var calc = new PriceCalculator();

var result = calc.Calculate(100, 0.2m);

Assert.Equal(120, result);

}What is usually mislabeled as a unit test

- Tests that hit a real database, even SQLite or LocalDB.

- Tests using EF Core with a real provider.

- Tests calling real HTTP APIs.

- Tests that start ASP.NET Core with

WebApplicationFactory. - Tests that require Docker, configuration files, or network access.

These are integration or component tests. They are useful, but they are not unit tests.

What .NET engineers should know

- 👼 Junior: A unit test checks one class or method without real dependencies.

- 🎓 Middle: Know how to isolate logic using interfaces, mocks, and dependency injection.

- 👑 Senior: Design code so business logic is testable without infrastructure and enforce correct test boundaries.

📚 Resources

❓ What do you test: behavior, state, or interactions, and why?

Behavior testing focuses on observable results. You verify inputs and outputs regardless of the internal implementation. This approach keeps tests stable during refactoring and clearly expresses business rules.

State testing checks the internal state after an operation. It is useful when the state itself is the outcome, such as domain entities or aggregates with invariants. The downside is tighter coupling to implementation details.

Interaction testing verifies how dependencies are called. It is applied when side effects matter, for example, sending emails, publishing events, or coordinating workflows. Overuse makes tests brittle because they mirror implementation structure.

Summary:

- Prefer behavior testing by default.

- Use state testing when the state represents business meaning.

- Use interaction testing only for side effects and orchestration.

What .NET engineers should know

- 👼 Junior: Behavior tests outputs, state tests data, interaction tests calls.

- 🎓 Middle: Prefer behavior testing and avoid asserting implementation details.

- 👑 Senior: Balance all three styles and keep tests refactor-friendly.

❓ Dummy vs Mock vs Stub vs Fake vs Spy: what is the difference, and when to use each?

Dummy

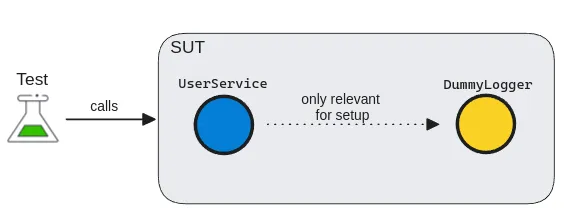

Dummies are used only for initialization and have no behavior. They act like placeholders, required to set up the system under test. With a dummy, we can convey that the object is not used directly in the test context.

Dummies have two main forms:

- Dummy values: used as simple value replacements in data fields

- Dummy objects: used for more complex data types and dependencies.

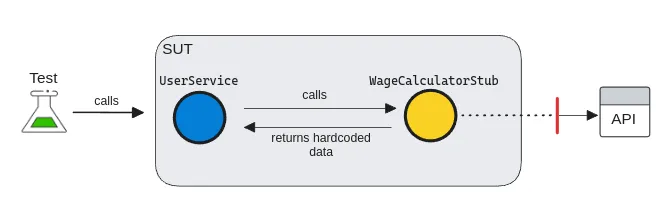

Stub

A stub is a slightly more sophisticated test double than a dummy, as it can return a value. They are used to simulate incoming interactions, such as returning hardcoded data to our SUT. A test stub is an implementation of an interface that provides a response to the system.

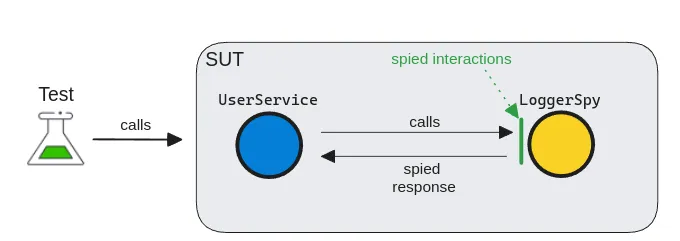

Spy

A test spy is almost like a real government spy, silently obtaining information. But instead of obtaining information from a competitor, a test spy gathers internal information from dependencies. A spy is a special kind of test double that can record and verify internal behaviors.

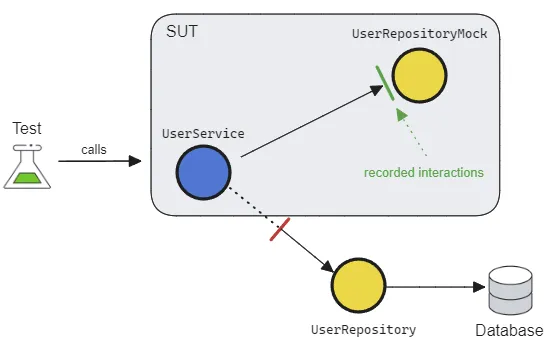

Mock

A Mock is a powerful beast, yet it is often the most misused. It can pre-record expectations and have configurable behaviors. Serving as a proxy for the dependency, it allows us to verify outgoing interactions, such as ensuring that a method is called with specific parameters.

Mocks can usually be created using 3rd-party libraries in most languages. Although they're flexible with many utilities, their syntax can be quite cryptic, making the tests less readable.

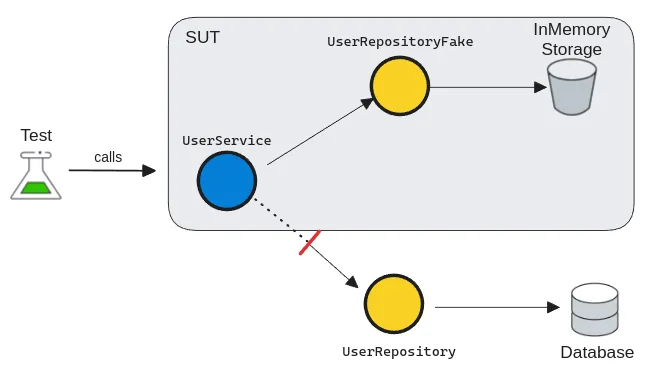

Fake

A fake is a simplified and lightweight implementation of a dependency. Seemingly, it behaves as a real dependency, but it just emulates business rules. Unlike mocks, they're used for verifying state rather than interactions. As they're the closest to real implementations, they're also the most powerful ones for simulating system behaviors.

What .NET engineers should know

- 👼 Junior: Know what each test double is used for and the basic differences.

- 🎓 Middle: Choose the simplest test double that solves the problem.

- 👑 Senior: Enforce clear test intent and prevent interaction-heavy test suites.

📚 Resources

❓ How do you avoid testing implementation details while still getting confidence?

To avoid testing implementation details, you must shift your focus from how the code works to what the code achieves. This is often called Behavior-Based or Outcome-Based testing.

1. Test the "Observable Behavior."

Only interact with the Public API of your class or module. If a piece of logic is private, don't change its access modifier just to test it. Instead, verify that the public method using that logic produces the correct output or state change.

- Bad: Asserting that a private

CalculateTax()method was called. - Good: Asserting that the

InvoiceTotalincludes the correct tax amount after callingFinalizeOrder().

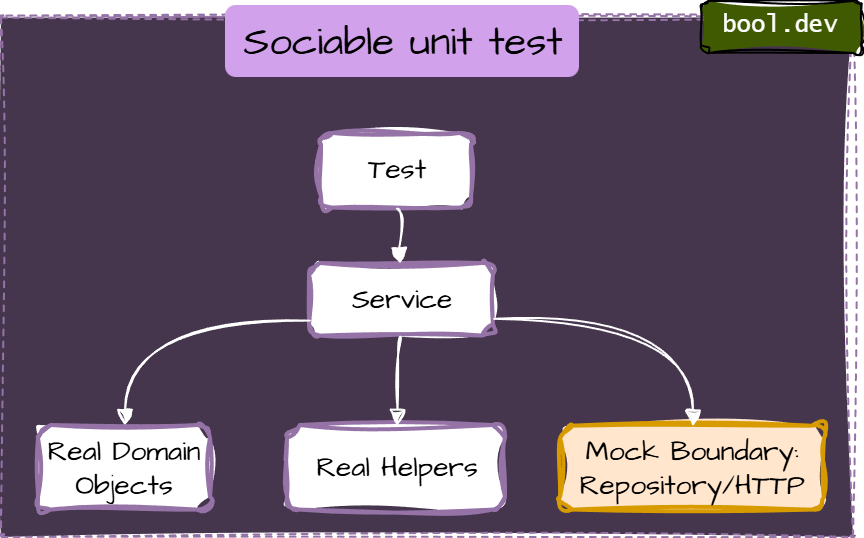

2. Move from Solitary to "Sociable" Tests

A "Solitary" test mocks every single dependency, which often forces you to mirror the internal code structure in your test setup. A Sociable Unit Test uses real versions of internal "helper" classes or domain objects, only mocking the true boundaries (Databases, External APIs).

3. Use State-Based Assertions

Whenever possible, check the final state (the "What") rather than the interaction (the "How").

Implementation Detail: mockRepo.Verify(r => r.Save(), Times.Once) — This breaks if you change your save logic to use a different method.

Behavior: Assert.Equal(expectedValue, database.GetValue()) — This ensures the data actually exists, regardless of which method saved it.

4. The Refactoring Litmus Test

The best way to know if you are testing the implementation is the Refactoring Test:

If I completely rewrite the internals of this method but keep the output exactly the same, do my tests stay green?

If they turn red because a mock setup failed or a private method disappeared, you are testing implementation details.

What .NET engineers should know

- 👼 Junior: Test outputs and visible effects, not private methods or internal calls.

- 🎓 Middle: Mock only external dependencies and keep assertions focused on behavior.

- 👑 Senior: Design boundaries so behavior testing is easy, and use a small set of integration tests for wiring confidence.

❓ What are the most common test smells in .NET codebases, and how do you fix them?

In .NET, "test smells," or antipatterns, are signs that your test suite is becoming a liability rather than an asset. Here are the most common ones and how to resolve them (detailed explanation you can check in a separate article):

Anti-Pattern 1: Testing Implementation Details

Smell

- Reflection to call private methods

InternalsVisibleToadded purely for tests- Asserting “private helper X was called.”

Fix

Test via public API

If private logic is complex, extract it into a separate class with a clear responsibility and test that class through its public surface.

Anti-Pattern 2: Over-Mocking (“Solitary Tests Everywhere”)

Smell

A test mocks every dependency—even simple domain objects and internal helpers—so the test setup mirrors the internal code structure. It's also calls solitary unit test

Fix: Prefer “Sociable” Unit Tests

- Use real implementations for internal collaborators (domain models, value objects, simple calculators). Mock only true boundaries:

- database repositories (if you’re unit-testing application logic)

- external HTTP APIs

- message buses

- filesystem

- clock/time and randomness

Anti-Pattern 3: Interaction-only assertions (mock.Verify everywhere)

Smell

Interaction assertions are sometimes useful at boundaries (“did we call the HTTP client?”), but they become brittle when used to verify internal steps.

Fix: assert outcome/state:

[Fact]

public void Finalize_SetsStatusToFinalized()

{

var order = new Order(subtotal: 100m, taxRate: 0.1m);

order.Finalize();

Assert.Equal(OrderStatus.Finalized, order.Status);

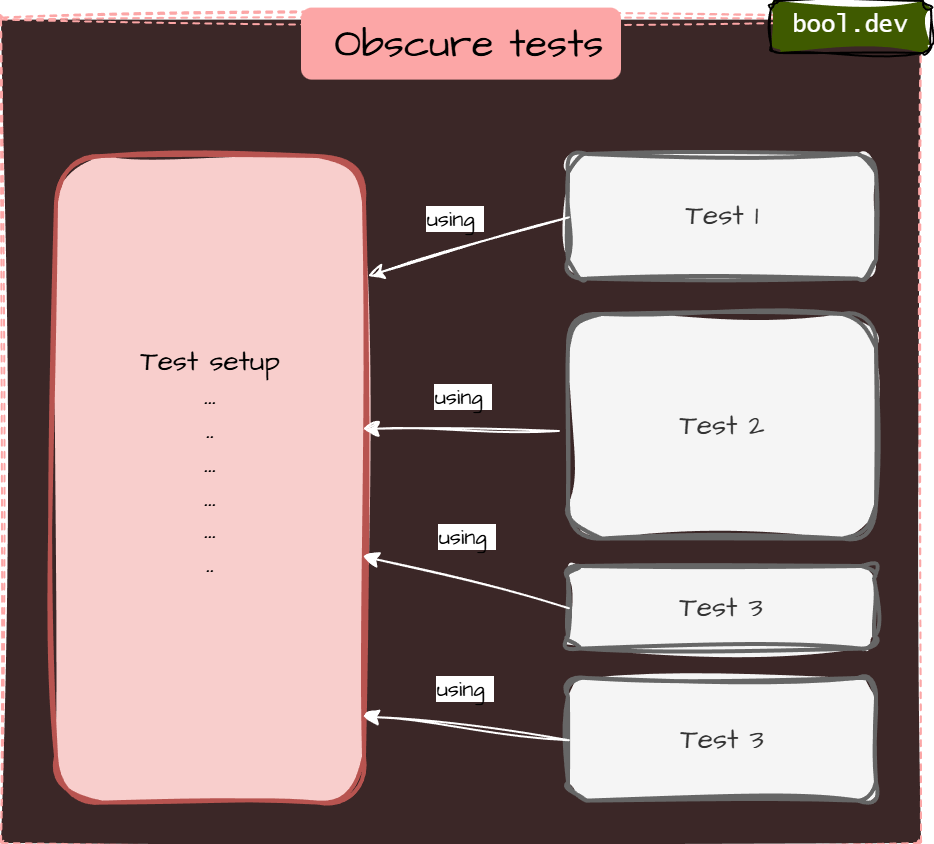

}Anti-Pattern 4: Obscure tests (giant setup)

Smell

- 100+ lines of setup

- The important inputs are buried

- copying setup across tests

Fix: AAA + Test Data Builder

public sealed class OrderBuilder

{

private decimal _subtotal = 100m;

private decimal _taxRate = 0.1m;

public OrderBuilder WithSubtotal(decimal value) { _subtotal = value; return this; }

public OrderBuilder WithTaxRate(decimal value) { _taxRate = value; return this; }

public Order Build() => new Order(_subtotal, _taxRate);

}

[Fact]

public void Finalize_ComputesTotal()

{

var order = new OrderBuilder()

.WithSubtotal(50m)

.WithTaxRate(0.05m)

.Build();

order.Finalize();

Assert.Equal(52.50m, order.Total);

}Anti-Pattern 5: Logic in tests (loops/if/switch)

Smell

Tests contain branching logic. Now you’ve written code inside your test that also needs testing

Example:

[Fact]

public void DiscountRules_Work()

{

foreach (var subtotal in new[] { 99m, 100m, 150m })

{

var order = new Order(subtotal, taxRate: 0m);

order.ApplyDiscounts();

if (subtotal >= 100m)

Assert.True(order.HasDiscount);

else

Assert.False(order.HasDiscount);

}

}Fix: parameterized tests

- Keep tests linear and explicit.

- Use parameterized tests instead of loops:

- xUnit:

[Theory]+[InlineData] - NUnit:

[TestCase]

- xUnit:

Example (xUnit)

[Theory]

[InlineData( 99, false)]

[InlineData(100, true)]

[InlineData(150, true)]

public void ApplyDiscounts_SetsHasDiscount_WhenThresholdMet(decimal subtotal, bool expected)

{

var order = new Order(subtotal, taxRate: 0m);

order.ApplyDiscounts();

Assert.Equal(expected, order.HasDiscount);

}Anti-Pattern 6: Sleepy/flaky async tests (Thread.Sleep)

Smell

Using fixed delays to “wait for work”.

Fix A: await the work (best)

If the API can expose a Task, do it:

[Fact]

public async Task SendsEmail()

{

var sut = new EmailDispatcher();

await sut.DispatchAsync("a@b.com", "hi");

Assert.True(sut.WasSent);

}Fix B: poll with timeout (when you can’t await directly)

private static async Task Eventually(Func<bool> condition, TimeSpan timeout)

{

var start = DateTime.UtcNow;

while (DateTime.UtcNow - start < timeout)

{

if (condition()) return;

await Task.Delay(20);

}

throw new TimeoutException("Condition was not met within the timeout.");

}

[Fact]

public async Task SendsEmail_Eventually()

{

var sut = new EmailDispatcher();

sut.Dispatch("a@b.com", "hi");

await Eventually(() => sut.WasSent, TimeSpan.FromSeconds(2));

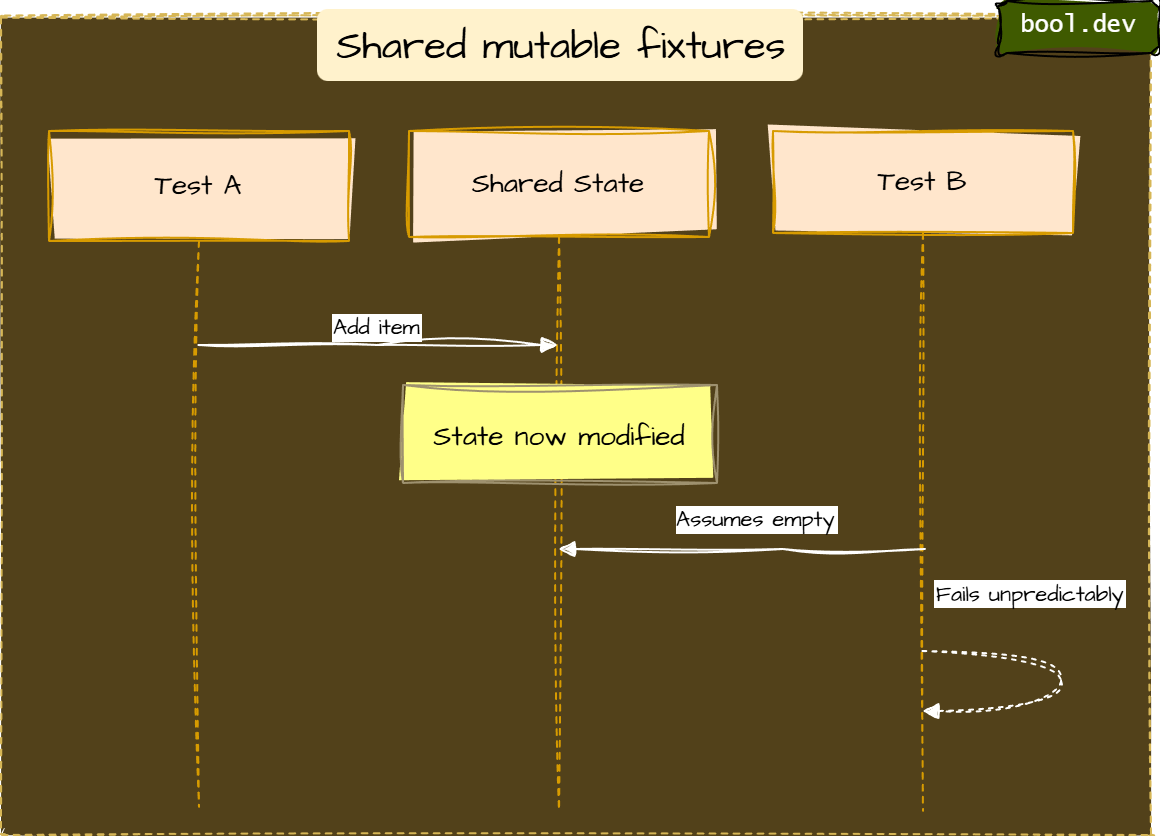

}Anti-pattern 7: Shared mutable fixtures

Smell

Static/shared state reused across tests:

- shared in-memory list

- shared DB without reset

- singleton services holding state

Fix

- Make each test independent (fresh instances per test)

- Avoid static mutable state

- If a DB is unavoidable, isolate:

- create schema per test run/class

- Run tests in a transaction and roll back

- Use containerized ephemeral DB in CI (integration layer)

Anti-Pattern 8: Non-Determinism (DateTime.Now, Random, Guid.NewGuid)

Smell

Tests depend on:

- current time (

DateTime.Now,DateTimeOffset.UtcNow) - time zones or culture settings (

CultureInfo.CurrentCulture) - randomness (

Random,Guid.NewGuid()) - machine-specific settings (environment variables, OS locale, file paths)

These tests pass on your machine but fail on CI, pass in January but fail in March, or pass 99 times and fail once — all without any code change.

Fix

- Fix A: Split by behavior

- Fix B: Use “assertion scopes” to improve failure signal

- Fix C: Prefer equivalence with explicit intent

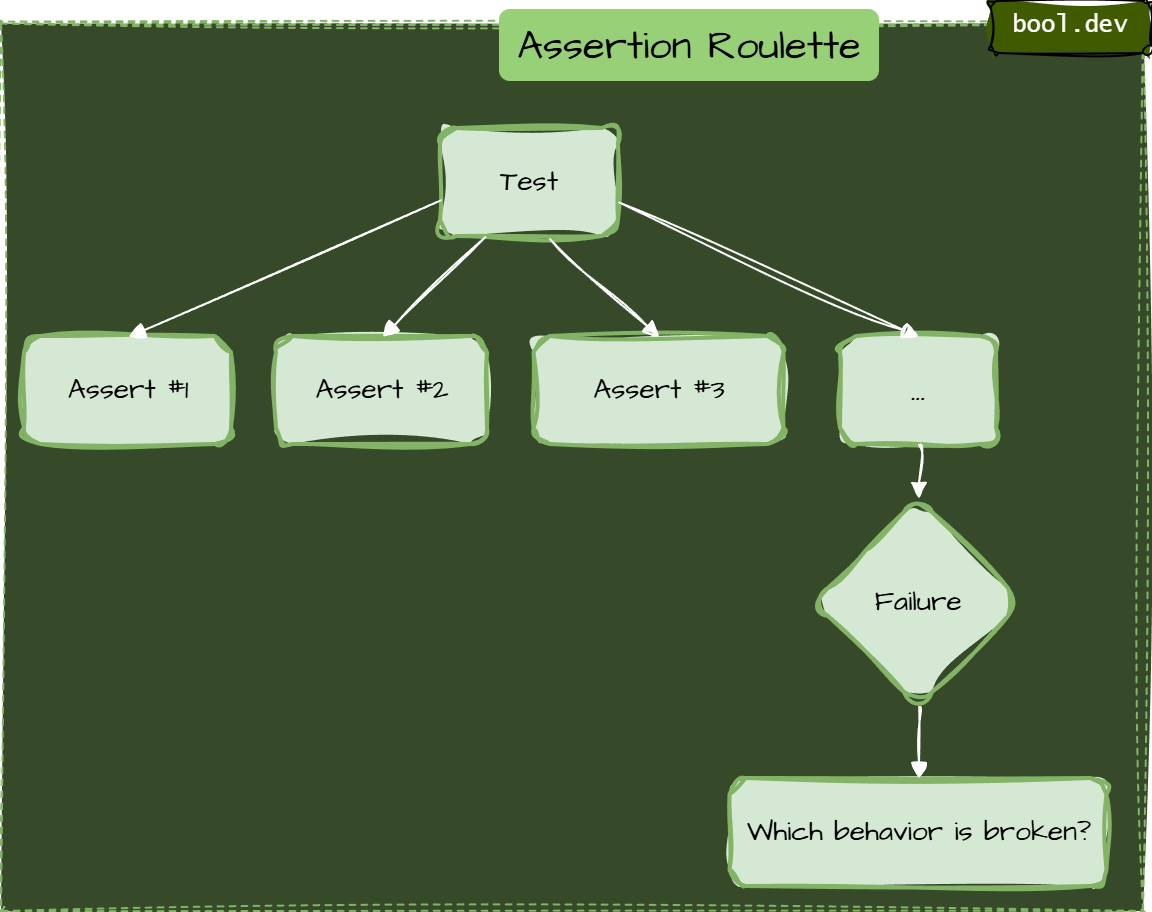

Anti-Pattern 9: Assertion Roulette (Many Asserts with No Clarity)

Smell

A single test contains a long list of assertions, and when it fails, you don’t immediately know which behavior broke.

Typical causes:

- testing multiple behaviors at once

- using plain

Assert.True(...)repeatedly without clear intent - validating an entire object graph in one go

Typical causes:

- testing multiple behaviors at once

- using plain

Assert.True(...)repeatedly without clear intent - validating an entire object graph in one go

Example:

[Fact]

public void Finalize_DoesEverything()

{

var order = CreateTestOrder();

order.Finalize();

Assert.True(order.IsFinalized);

Assert.Equal(110m, order.Total);

Assert.NotNull(order.FinalizedAt);

Assert.Equal("John", order.Customer.Name);

Assert.Equal(2, order.Items.Count);

Assert.True(order.Items.All(i => i.Price > 0));

Assert.Equal(OrderStatus.Finalized, order.Status);

}When this fails at line 5 with "Expected: 2, Actual: 3", you have no idea whether the bug is in item logic, finalization, or customer assignment. You also do not know if the other assertions would have passed or failed, because xUnit stops at the first failure.

Fix A:

Split by behavior. Write one test per meaningful rule

Fix B:

Use “assertion scopes” to improve the failure signal

Fix C:

Prefer equivalence with explicit intent

Anti-Pattern 10: Testing the Wrong Thing (Third-Party Libraries or Framework Internals)

Smell

You write unit tests that prove ASP.NET Core EF Core, Newtonsoft/System.Text.Json, or AutoMapper behave as documented — without asserting your business rule or configuration intent.

This is common when tests are created to “increase coverage” rather than to protect behavior.

Better: test your contract or mapping configuration (integration-ish)

If your API contract requires camelCase, test your endpoint (or your serialization settings) in a minimal integration test.

What .NET engineers should know

- 👼 Junior: Watch for slow and flaky tests first, then simplify setups and assertions.

- 🎓 Middle: Separate unit and integration tests, reduce over-mocking, and enforce deterministic tests.

- 👑 Senior: Treat test architecture as part of system design, enforce boundaries, and keep the suite fast and trustworthy.

📚 Resources: Top 10 Unit Testing Anti-Patterns in .NET and How to Avoid Them

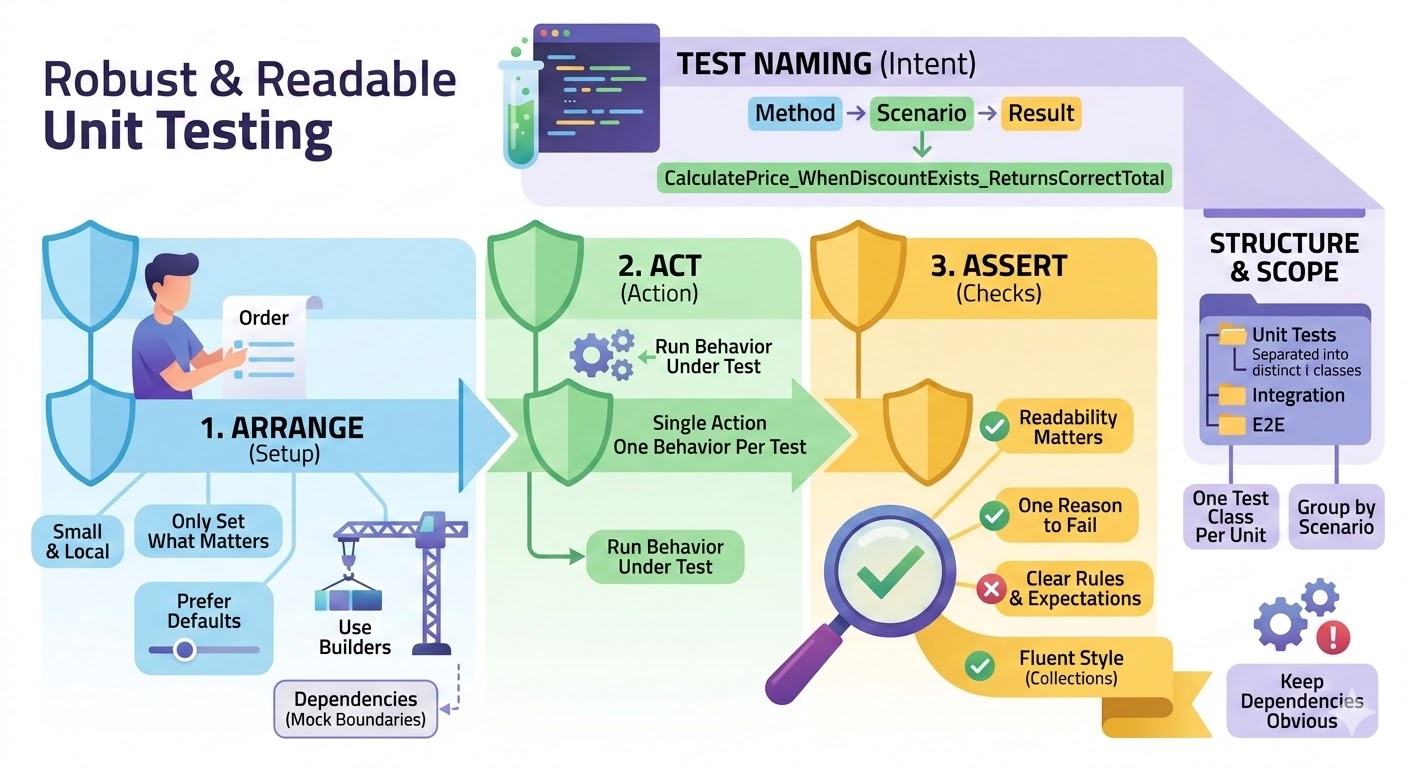

❓ How do you structure tests for readability?

Use a consistent shape

- Arrange, Act, Assert (AAA)

Keep it visually obvious where the setup ends, and the action starts, and where the checks are. - One reason to fail

One behavior per test. If it fails, it should be obvious which rule was broken.

Write names that explain intent

- Method_Scenario_ExpectedResult

Example:PlaceOrder_WhenStockIsZero_ReturnsOutOfStock

Keep the setup small and local

- Only set what matters for this test.

- Prefer defaults with overrides (builders) over huge object graphs.

Use helpers, but don’t hide the story

- Builders for data creation are good.

- Avoid helper methods that hide the actual behavior under test.

Make assertions readable

- Prefer a few clear assertions over many vague ones.

- Use FluentAssertions when it improves clarity, especially for collections and object graphs.

Group tests by unit and behavior

- One test class per unit (service/class).

- Group by method or scenario.

- Separate Unit, Integration, and E2E at the folder or project level.

Keep dependencies obvious

- Constructor setup is fine, but don’t build a full application in a unit test.

- Mock only boundaries and name mocks clearly.

What .NET engineers should know

- 👼 Junior: Use AAA and clear names so tests read like a short story.

- 🎓 Middle: Keep setup minimal with builders and assert one behavior per test.

- 👑 Senior: Standardize conventions across the repo and prevent hidden complexity in test helpers.

❓ How do you test cancellation and verify cancellation is actually honored?

- Make cancellation observable. The code under test should either throw OperationCanceledException, return a canceled Task, or stop producing side effects when the token is canceled.

- Cancel the drive from the test, then await completion. Cancel the token, then await the task. Do not rely on sleep. Use a gate (TaskCompletionSource) to ensure the operation actually started.

- Verify both parts

- The task is canceled.

- Work stops, meaning no extra calls, no extra writes, no extra events after cancellation.

C# example

Business logic:

public interface IEmailSender

{

Task SendAsync(string to, string subject, CancellationToken ct);

}

public sealed class NewsletterService

{

private readonly IEmailSender _emailSender;

public NewsletterService(IEmailSender emailSender) => _emailSender = emailSender;

public async Task SendBatchAsync(IEnumerable<string> recipients, CancellationToken ct)

{

foreach (var r in recipients)

{

ct.ThrowIfCancellationRequested();

await _emailSender.SendAsync(r, "Hello", ct);

}

}

}Deterministic test:

public sealed class FakeEmailSender : IEmailSender

{

private readonly TaskCompletionSource _firstCallStarted =

new(TaskCreationOptions.RunContinuationsAsynchronously);

private int _sent;

public int SentCount => _sent;

public Task FirstCallStarted => _firstCallStarted.Task;

public async Task SendAsync(string to, string subject, CancellationToken ct)

{

_firstCallStarted.TrySetResult();

// Cancellation-aware async point.

await Task.Yield();

ct.ThrowIfCancellationRequested();

Interlocked.Increment(ref _sent);

// Simulate real async work that honors cancellation.

await Task.Delay(TimeSpan.FromSeconds(30), ct);

}

}

public class NewsletterServiceTests

{

[Fact]

public async Task SendBatchAsync_honors_cancellation_and_stops_side_effects()

{

var sender = new FakeEmailSender();

var sut = new NewsletterService(sender);

using var cts = new CancellationTokenSource();

var recipients = new List<string> { "a@x", "b@x", "c@x" };

var task = sut.SendBatchAsync(recipients, cts.Token);

// Ensure the operation started, deterministically.

await sender.FirstCallStarted;

// Cancel and assert the task is canceled.

cts.Cancel();

await Assert.ThrowsAsync<OperationCanceledException>(async () => await task);

// Verify cancellation stopped the work (no full batch).

Assert.True(sender.SentCount < recipients.Count);

}

}What .NET engineers should know

- 👼 Junior: Cancel via CancellationTokenSource and assert OperationCanceledException or a canceled Task.

- 🎓 Middle: Use gates (TaskCompletionSource) to make cancellation tests deterministic and verify side effects stop.

- 👑 Senior: Design APIs to propagate cancellation everywhere and enforce cancellation behavior in code reviews.

📚 Resources

❓ How do you test time and scheduling deterministically (timeouts, delays, cron-like logic)?

Testing time-based logic is notoriously difficult because standard system clocks are non-deterministic. To test them reliably, you must decouple your code from the real-time clock.

Never use DateTime.Now, Task.Delay, or CancellationTokenSource(timeout) directly in your business logic. Instead, inject a Time Provider.

Since .NET 8, Microsoft has provided a built-in TimeProvider abstraction specifically for this purpose.

Example: Testing Delays and Timeouts

With a FakeTimeProvider, you can "teleport" time forward. The test executes instantly, but the code thinks minutes or hours have passed.

[Fact]

public async Task Should_Timeout_After_Five_Seconds()

{

// Arrange

var fakeTime = new FakeTimeProvider();

var sut = new OrderProcessor(fakeTime);

// Act

var task = sut.ProcessAsync(orderId);

// Fast-forward time manually

fakeTime.Advance(TimeSpan.FromSeconds(5.1));

// Assert

await Assert.ThrowsAsync<TimeoutException>(() => task);

}Example: Handling CancellationToken Timeouts

Standard CancellationTokenSource with a delay is hard to test. Instead, pass your TimeProvider when creating the token (available in .NET 8+).

// Logic code

using var cts = _timeProvider.CreateCancellationTokenSource(TimeSpan.FromMinutes(10));

// Test code

fakeTime.Advance(TimeSpan.FromMinutes(11));

Assert.True(cts.IsCancellationRequested);What .NET engineers should know

- 👼 Junior: Inject time instead of using DateTime.Now, avoid sleeping in tests.

- 🎓 Middle: Use TimeProvider and FakeTimeProvider, and keep scheduling logic pure and testable.

- 👑 Senior: Design background services so that time, timers, and scheduling are replaceable and tests can drive time deterministically.

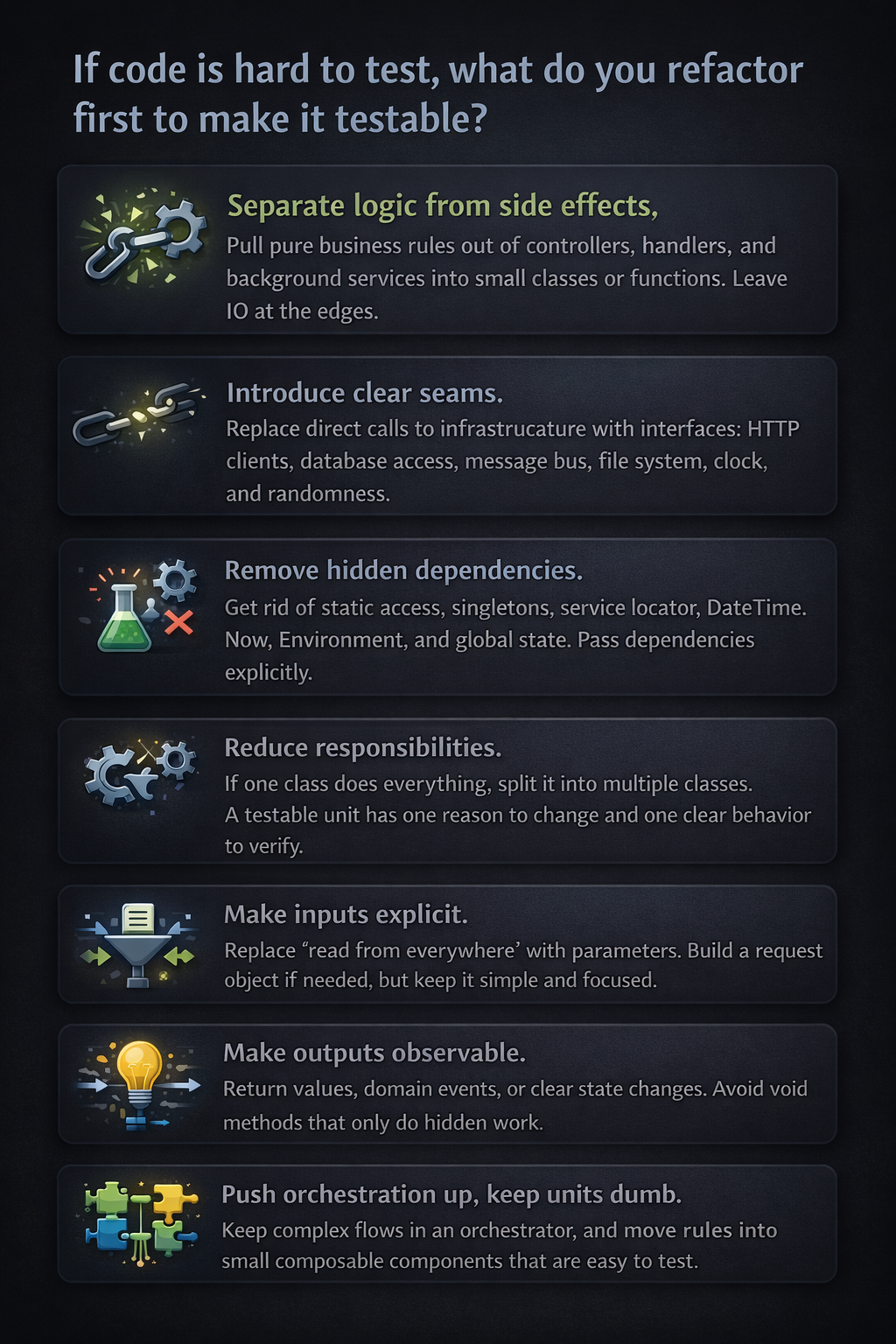

❓ If code is hard to test, what do you refactor first to make it testable?

- Separate logic from side effects. Pull pure business rules out of controllers, handlers, and background services into small classes or functions. Leave IO at the edges.

- Introduce clear seams. Replace direct calls to infrastructure with interfaces: HTTP clients, database access, message bus, file system, clock, and randomness.

- Remove hidden dependencies. Get rid of static access, singletons, service locator,

DateTime.Now, Environment, and global state. Pass dependencies explicitly. - Reduce responsibilities. If one class does everything, split it into multiple classes. A testable unit has one reason to change and one clear behavior to verify.

- Make inputs explicit. Replace “read from everywhere” with parameters. Build a request object if needed, but keep it simple and focused.

- Make outputs observable. Return values, domain events, or clear state changes. Avoid void methods that only do hidden work.

- Push orchestration up, keep units dumb. Keep complex flows in an orchestrator, and move rules into small composable components that are easy to test.

What .NET engineers should know

- 👼 Junior: Extract business logic into small methods and avoid static time and globals.

- 🎓 Middle: Introduce interfaces for external dependencies and split large classes into focused units.

- 👑 Senior: Design boundaries and architecture so most logic is testable without infrastructure.

📚 Resources

Integration testing and environments

❓ How do you test EF Core migrations and schema changes?

EF Core migrations are code that modifies production databases — they deserve the same test coverage as application logic. The failure modes are silent and expensive: a migration that runs without error but leaves the schema in a state the application cannot use, a rollback that does not fully restore the previous state, or a new migration that conflicts with data already in the database. None of these are caught by unit tests or by running the application against a fresh database.

The first test to write is migration idempotency — applying all pending migrations to a real database (Postgres, SQL Server via Testcontainers) and then verifying the resulting schema matches the EF Core model. context.Database.EnsureCreated() bypasses migrations entirely, so always use MigrateAsync() in tests. The IRelationalDatabaseCreator can then compare the applied schema against the current model to detect drift.

// Testcontainers — real SQL Server, real migrations

public class MigrationTests : IAsyncLifetime

{

private readonly MsSqlContainer _container = new MsSqlBuilder().Build();

public async Task InitializeAsync() => await _container.StartAsync();

public async Task DisposeAsync() => await _container.DisposeAsync();

[Fact]

public async Task Migrations_ApplyCleanly_AndMatchModel()

{

var options = new DbContextOptionsBuilder<AppDbContext>()

.UseSqlServer(_container.GetConnectionString())

.Options;

await using var context = new AppDbContext(options);

// Apply all migrations — never EnsureCreated in migration tests

await context.Database.MigrateAsync();

// Verify schema matches current EF model — catches drift

var creator = context.GetService<IRelationalDatabaseCreator>();

Assert.True(await creator.HasTablesAsync(),

"Schema missing tables after migration");

}

}What .NET engineers should know:

- 👼 Junior: Always test migrations against a real database via Testcontainers — never EnsureCreated, never an in-memory provider — because migration behavior depends on the actual database engine.

- 🎓 Middle: Test destructive migrations with pre-seeded data that mirrors production shape; assert that existing rows survive schema changes with correct defaults and that rollback restores the previous schema cleanly.

- 👑 Senior: Treat migration tests as a mandatory CI gate with three layers — clean apply against a real engine, destructive change with data fixtures, and schema drift detection that fails the build when entity changes have no corresponding migration; for large tables, test migration performance under realistic data volume since a migration that runs in 50ms on an empty database can lock a 50M row table for minutes.

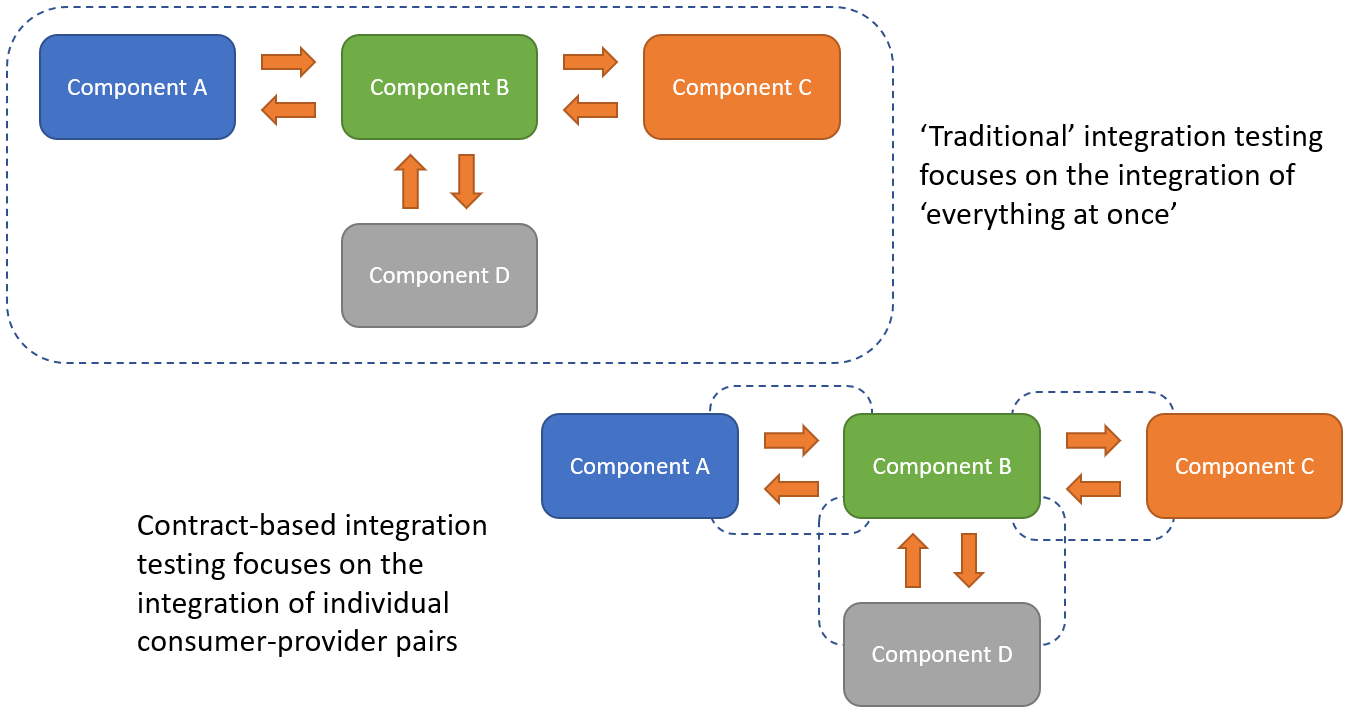

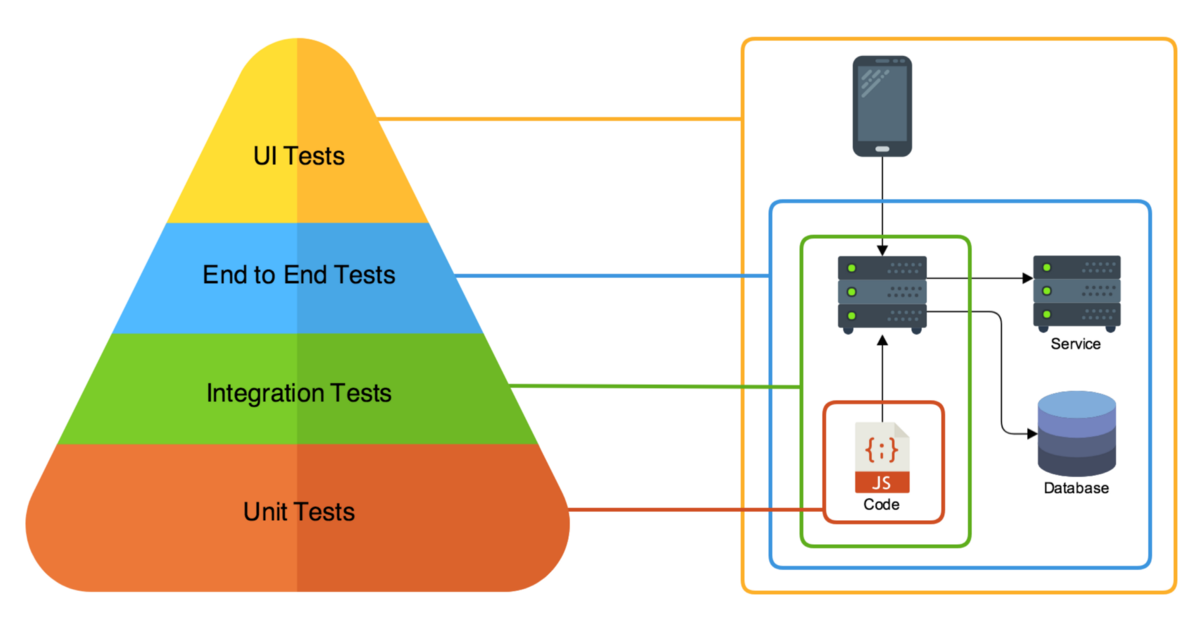

❓ What do you consider an integration test in .NET, and what is still unit-level?

In .NET, the line between unit and integration testing isn't about the tool (both use xUnit/NUnit) or the language, but about boundaries.

Unit-level tests

A test is unit-level when it verifies a single unit of logic in isolation, without real infrastructure.

Typical unit-level tests in .NET:

- Business logic classes with mocked or fake dependencies.

- Domain services, validators, calculators, parsers.

- Code using in-memory fakes instead of real DB, HTTP, or messaging.

- EF Core InMemory tests behave closer to component tests because they execute EF infrastructure but not a real relational database.

- ASP.NET controllers tested by calling actions directly, without hosting the app.

Key signal: If the test runs quickly, has no external dependencies, and fails only due to logic changes, it is a unit-level test.

Integration tests

A test is an integration test when it verifies how multiple components work together using real implementations.

Typical integration tests in .NET:

- EF Core with a real provider (SQL Server, PostgreSQL, SQLite).

- ASP.NET Core hosted with WebApplicationFactory.

- Real HTTP clients calling in-process APIs.

- Serialization and deserialization using real JSON options.

- Dependency Injection container wiring.

- Message handlers tested against real queues or brokers (often via Testcontainers).

Key signal: If the test validates wiring, configuration, or infrastructure behavior, it is an integration test.

Common confusion points

- EF Core InMemory provider. Still unit-level. It does not behave like a real relational database.

- WebApplicationFactory with mocked DB. Integration tests of HTTP pipeline, routing, filters, and middleware, even if the DB is mocked.

- Repository tests with real DB. Integration tests, not unit tests.

- “Fast integration tests” are still integration tests. Speed does not change the category.

What .NET engineers should know

- 👼 Junior: Unit tests isolate logic, integration tests use real implementations.

- 🎓 Middle: Know which dependencies turn a test into integration and structure projects accordingly.

- 👑 Senior: Design test strategy with clear boundaries and balance unit and integration coverage.

❓ What do you run “real” in integration tests (DB, cache, queue), and what do you fake?

Run real components where behavior, configuration, or contracts matter, and mocks would lie.

Typically, real in .NET integration tests:

- Database. Real DB engine and provider. This catches migrations, indexes, transactions, isolation levels, and SQL behavior that would otherwise be missed.

- ORM and mappings. EF Core with a real provider to validate LINQ translation, value converters, owned types, and constraints.

- Serialization. Real JSON or Protobuf serializers with production options. This prevents contract drift.

- Dependency Injection and configuration. Real DI container and app configuration to catch missing registrations and lifetime issues.

- HTTP pipeline. Real ASP.NET Core hosting with routing, middleware, filters, and auth handlers (often stubbed auth).

- Message contracts. Real message serialization and handlers, even if the broker is replaced.

Typically faked in integration tests:

You should fake anything that is outside your control, has a usage cost, or is non-deterministic.

- External third-party APIs

- Use HTTP stubs or in-memory test servers. Do not depend on the internet or partner systems.

- Email, SMS, push notifications

- Replace with fakes that capture intent, not delivery.

- Time and randomness

- Inject TimeProvider and deterministic generators.

- Authentication and identity providers

- Fake or stub auth to focus on your app’s behavior, not OAuth correctness.

Queues and caches: case by case

- Cache (Redis). Real when eviction, TTL, or serialization matters. Fake when cache is just a performance optimization.

- Message queues. Real when ordering, retries, and at least once delivery matter. Fake when testing handler logic only.

- Background workers. Real execution for integration tests, but controlled lifecycle and short runs.

What .NET engineers should know

- 👼 Junior: Real DB and serialization, fake external services.

- 🎓 Middle: Choose real components where behavior affects correctness, fake the rest.

- 👑 Senior: Define system boundaries and standardize what runs in real integration tests.

📚 Resources

❓ Why is an in-memory DB often a bad choice compared to a real database for integration testing?

While the EF Core In-Memory provider or SQLite In-Memory are tempting because they are fast and require zero setup, they often create a "false sense of security." They are not true databases; they are collections of objects in memory that happen to share a similar API.

Here is why they often fail as a substitute for a real database in integration tests:

- No real SQL translation. EF Core InMemory does not execute SQL. It evaluates queries in memory, so you do not catch LINQ translation issues that a real provider would fail on.

- Different relational behavior. Constraints, indexes, foreign keys, unique rules, cascade deletes, transactions, and isolation levels are not equivalent.

- Different null and comparison semantics. Case sensitivity, collation, string comparisons, datetime handling, and ordering can differ from SQL Server or PostgreSQL.

- Different concurrency behavior. Real DB locking, deadlocks, optimistic concurrency tokens, and transaction boundaries are not represented correctly.

- False performance assumptions. In-memory tests hide slow queries, missing indexes, N+1 patterns, and bad execution plans.

The Modern Alternative: Testcontainers

Instead of compromising with an in-memory fake, use Testcontainers for .NET. It allows you to spin up a real Docker container of your production database (SQL Server, PostgreSQL, etc.) specifically for the duration of the test.

What .NET engineers should know

- 👼 Junior: In-memory DB can pass tests while real DB fails due to different behavior.

- 🎓 Middle: Use real providers for EF Core integration tests to validate SQL translation and constraints.

- 👑 Senior: Standardize on real databases for integration testing and keep in-memory only for unit-level speed.

❓ How do you seed data for integration tests without making tests slow and coupled?

Seeding data is the "Goldilocks" problem of integration testing: too little data and you aren't testing real scenarios; too much data and your tests become slow and "brittle" (where changing one test breaks ten others).

To keep tests fast and decoupled, you should move away from a single "Global Seed" toward Localized, Scenario-Based Seeding.

1. Avoid the "Global Seed" Trap

Many developers use a single script to populate thousands of rows across the entire test suite.

Problem: If Test A needs "User 1" to be an Admin, but Test B needs "User 1" to be a Guest, you have a conflict. This leads to "Mystery Guest" bugs, where tests fail because of data they didn't create.

Fix: Start with an empty (or minimally static) database and let each test "Arrange" its own specific data.

2. Use "Object Mothers" or "Builders" for Data

Instead of raw SQL scripts, use C# classes to generate your entities. This ensures that if you add a required column to your database, you only have to update your Builder class once, rather than 50 SQL scripts.

// Example using a Builder

var order = new OrderBuilder()

.WithItems(3)

.ForCustomer(existingCustomer)

.Build();

await dbContext.Orders.AddAsync(order);

await dbContext.SaveChangesAsync();To keep tests fast and decoupled, you should move away from a single "Global Seed" toward Localized, Scenario-Based Seeding.

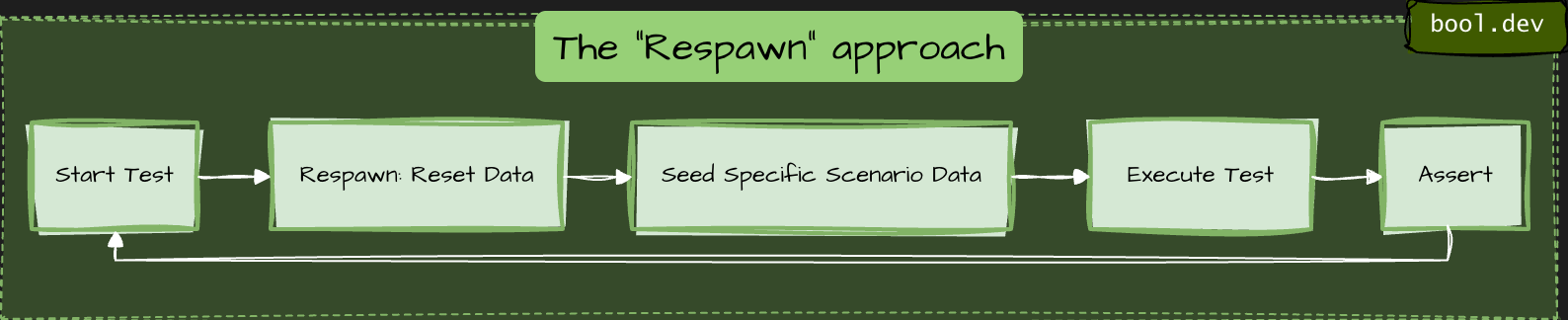

3. Strategy: The "Respawn" approach

To keep tests fast, you don't want to delete and recreate the database schema for every test. Instead, 'respawn' your data.

How it works: It uses smart SQL commands (TRUNCATE or DELETE) to clear only the data in your tables while keeping the schema intact. It is significantly faster than running migrations or dropping the DB.

4. Shared vs. Fresh Databases

| Approach | Speed | Isolation | Best For |

|---|---|---|---|

| One DB per Test | Slow | Perfect | Parallel tests that modify global settings. |

| Shared DB (Respawn) | Fast | Careful | Standard CRUD and business logic tests. |

| Static Reference Data | Instant | High | Things that never change (Countries, Currencies). |

5. Efficient Seeding Techniques

- Batching: If you need to seed 100 records, use

AddRange()and a singleSaveChangesAsync()call to minimize round-trips to the database. - Respawn Checkpointing: Respawn can "checkpoint" a base state. You can seed your static lookup data once, checkpoint it, and then only reset the transactional data (Orders, Users) between tests.

- Unique IDs: Always use unique values for names or emails (e.g.,

$"test-{Guid.NewGuid()}@example.com") to prevent collision if tests run in parallel.

What .NET engineers should know

- 👼 Junior: Seed minimal data per test and keep it deterministic.

- 🎓 Middle: Use builders/factories and isolate tests with transactions or fresh databases.

- 👑 Senior: Standardize seeding and isolation strategy to keep integration tests fast and maintainable.

❓ How do you isolate the state between tests?

Isolating the state is the hardest part of integration testing. If tests leak data into one another, you get "flaky" results where tests pass individually but fail when run in a different order.

Here are the three primary strategies for .NET, ranked from fastest to most isolated:

The Transactional Rollback

The test opens a transaction at the start, performs all operations, and then rolls it back instead of committing it.

- How: In EF Core, you use

_context.Database.BeginTransaction(). - Pros: Extremely fast; the database never actually changes.

- Cons: Doesn't work if your code handles its own transactions (nested transactions can be tricky) or if you are testing multi-threaded scenarios where different connections need to see the data.

2. The "Clean Slate" (Respawn)

You share a single database across the entire run, but you nuke the data between every test.

- How: Use the Respawn. It checkpoints the database and uses

TRUNCATEorDELETEcommands to reset tables to a blank state in milliseconds. - Pros: High fidelity; works with real multi-connection scenarios.

- Cons: You cannot run tests in parallel against the same database instance.

3. Database-per-Test / Schema-per-Test (Most Isolated)

Every test gets its own completely isolated sandbox.

- Schema-per-test: You create a unique schema name (e.g.,

test_guid_1) for each test within the same database instance. - Database-per-test: You spin up a fresh Docker container for every test using Testcontainers.

- Pros: Provides perfect isolation; enables true parallel execution.

- Cons: Slower. Spinning up a container or running migrations 500 times adds significant overhead to your CI/CD pipeline.

The Recommended Hybrid Approach

Most high-performing .NET teams use this combination:

- Shared Container: Spin up one database container for the entire test collection (Database-per-run).

- Respawn Reset: Use Respawn to clear data between each test.

- Unique Data: Use

Guidor unique prefixes for entities to prevent rare collisions.

What .NET engineers should know

- 👼 Junior: Each test must run independently with no shared state.

- 🎓 Middle: Know tradeoffs of transactions vs reset vs per-test DB and choose based on speed and realism.

- 👑 Senior: Standardize isolation strategy, ensure parallel safety, and prevent leaks from background processing.

❓ How do you use Testcontainers.NET for SQL Server/Postgres/Redis/Kafka in tests?

To use Testcontainers for .NET, you leverage the Docker API to spin up real infrastructure instances as part of your test lifecycle. It replaces "In-Memory" fakes with actual binaries running in lightweight containers.

Setup:

You generally define your containers in an xUnit IAsyncLifetime or a shared CollectionFixture so they start before the tests and dispose of themselves afterward.

public class DatabaseFixture : IAsyncLifetime

{

// Define the specific container

private readonly PostgreSqlContainer _dbContainer = new PostgreSqlBuilder()

.WithImage("postgres:15-alpine")

.WithDatabase("testdb")

.WithUsername("admin")

.WithPassword("password")

.Build();

public async Task InitializeAsync() => await _dbContainer.StartAsync();

public async Task DisposeAsync() => await _dbContainer.DisposeAsync();

// Use this to configure your DbContext

public string ConnectionString => _dbContainer.GetConnectionString();

}To run a full API integration test, you inject the container's connection string into your SUT (System Under Test) during the host building phase.

public class MyApiTests : IClassFixture<DatabaseFixture>

{

private readonly DatabaseFixture _fixture;

public MyApiTests(DatabaseFixture fixture) => _fixture = fixture;

[Fact]

public async Task GetUser_ReturnsSuccess()

{

var factory = new WebApplicationFactory<Program>()

.WithWebHostBuilder(builder =>

{

builder.ConfigureServices(services =>

{

// Overwrite the real DB with the container connection string

services.AddDbContext<MyDbContext>(options =>

options.UseNpgsql(_fixture.ConnectionString));

});

});

var client = factory.CreateClient();

// ... execute test

}

}

Recommendation

- If your test suite is large, spinning up a container for every test class is slow. You can use the Singleton Pattern or xUnit Collection Fixtures to share one container instance across multiple test files, using a "Respawn" to clear the data between individual tests.

- Seed minimal data per test, not a huge baseline dataset.

- Use timeouts as safety nets so tests never hang.

What .NET engineers should know

- 👼 Junior: Use Testcontainers to run real dependencies and keep tests isolated.

- 🎓 Middle: Reuse containers with fixtures, apply migrations once, seed minimal data.

- 👑 Senior: Standardize container lifecycle, speed strategy, and failure diagnostics across the repo.

📚 Resources

❓ What are the challenges of running test containers on Windows agents?

- Most official images used in tests are Linux-based. On Windows agents, you either need Linux containers support or you are forced into Windows container images, which are fewer, heavier, and sometimes behave differently.

- Many Windows CI agents run Windows Server without Docker Desktop. You rely on the Docker Engine setup, which can vary widely across runners. Features like Linux containers via WSL2 are typically not available on Windows Server agents.

- Windows containers usually start more slowly and consume more resources. CI Windows runners are often slower on disk IO, too. This increases flakiness if timeouts are tight.

- Port mapping and DNS can be less predictable, especially with NAT networking. “localhost” assumptions break more often. Always use mapped ports and connection strings from Testcontainers.

- Volume mounts and path handling differ (C:\ paths, permissions, file locking). Some images and tooling assume POSIX paths. Windows file locks can cause cleanup issues.

- Windows agents can experience memory pressure more quickly with multiple containers. Parallel test runs are more likely to cause timeouts.

- For Windows containers, image tags are tied to Windows build numbers. You can get “image not compatible with this host” errors if the host build and image build do not match.

- Capturing logs and doing quick “exec into container” is still possible, but scripts used in Linux pipelines often do not work as-is on Windows (shell, path, quoting).

What .NET engineers should know

- 👼 Junior: Windows agents often struggle because most test images are Linux-based, and startup is slower.

- 🎓 Middle: Plan for container mode, networking differences, and slower performance, and tune timeouts and parallelism.

- 👑 Senior: Separate pipelines so container integration tests run on Linux agents, keep Windows agents for Windows-specific value.

HTTP / REST API testing

❓ How do you test file upload/download endpoints?

File upload and download endpoints have a different failure surface than JSON APIs — content-type negotiation, multipart boundary parsing, stream handling, size limits, and storage backend integration all sit outside what unit tests reach. The meaningful tests drive real HTTP through a test host with real file content and assert on both the HTTP contract and the storage side effect.

Upload testing means constructing a real MultipartFormDataContent request with actual file bytes and sending it through WebApplicationFactory. The test asserts on the HTTP response, then queries the storage backend directly to verify the file was persisted correctly — name, size, content type, and content. Asserting only on the HTTP response misses the case where the endpoint returns 200 but writes corrupt or empty content to storage.

[Fact]

public async Task UploadEndpoint_StoresFile_WithCorrectMetadata()

{

// Arrange — real file content, not empty bytes

var fileContent = "order-id,product-id,quantity\n1,SKU-001,2"u8.ToArray();

using var content = new MultipartFormDataContent();

content.Add(

new ByteArrayContent(fileContent) { Headers = { ContentType = new("text/csv") } },

name: "file",

fileName: "orders.csv");

// Act — drive through real test host

var response = await _client.PostAsync("/api/files/upload", content);

Assert.Equal(HttpStatusCode.Created, response.StatusCode);

var result = await response.Content.ReadFromJsonAsync<UploadResponse>();

// Assert storage side effect — not just HTTP response

var stored = await _storageClient.GetAsync(result!.FileId);

Assert.Equal("orders.csv", stored.FileName);

Assert.Equal("text/csv", stored.ContentType);

Assert.Equal(fileContent.Length, stored.SizeBytes);

Assert.Equal(fileContent, stored.Content);

}Download testing requires asserting on the full HTTP response contract — status code, Content-Type header, Content-Disposition header, and the actual byte content of the response body. A common gap is testing that the file downloads correctly but not verifying the Content-Disposition filename, which controls how browsers name the saved file. Use response.Content.ReadAsByteArrayAsync() and compare against the known source bytes.

[Fact]

public async Task DownloadEndpoint_ReturnsCorrectFile_WithHeaders()

{

// Arrange — seed a known file into storage

var fileId = await _storageClient.StoreAsync("orders.csv", "text/csv",

"order-id,product-id\n1,SKU-001"u8.ToArray());

// Act

var response = await _client.GetAsync($"/api/files/{fileId}/download");

// Assert HTTP contract

Assert.Equal(HttpStatusCode.OK, response.StatusCode);

Assert.Equal("text/csv", response.Content.Headers.ContentType?.MediaType);

Assert.Equal("attachment; filename=\"orders.csv\"",

response.Content.Headers.ContentDisposition?.ToString());

// Assert content integrity — byte-for-byte match

var bytes = await response.Content.ReadAsByteArrayAsync();

Assert.Equal("order-id,product-id\n1,SKU-001"u8.ToArray(), bytes);

}What .NET engineers should know:

- 👼 Junior: Test both the HTTP response and the storage side effect — a 200 response that writes empty bytes to storage is a passing test covering a real bug.

- 🎓 Middle: Assert the full download response contract, including Content-Type and Content-Disposition headers; test size limits, empty files, and rejected content types as explicit cases rather than relying on framework defaults to behave correctly.

- 👑 Senior: Use Azurite or LocalStack via Testcontainers instead of mocking storage interfaces — real SDK behavior around streams, retry policies, and content negotiation is exactly what file endpoint tests need to cover; treat content integrity (byte-for-byte round-trip verification) as a mandatory assertion for any upload/download flow.

❓ How do you test ASP.NET Core endpoints without duplicating implementation logic in tests?

The recommended approach is to use WebApplicationFactory<T> from the Microsoft.AspNetCore.Mvc.Testing package. It spins up your real application in-memory — with the actual middleware pipeline, routing, dependency injection, and configuration — and exposes an HttpClient to make requests against it. This means you are testing the full HTTP contract of your endpoint (status codes, response bodies, headers) without copying or re-implementing any of the logic that lives inside it.

This approach sits between a unit test and a full E2E test, often called an integration test or functional test, and is the standard way to test ASP.NET Core controllers, minimal API endpoints, middleware, and filters together as a cohesive unit.

Code example

minimal API:

app.MapGet("/products/{id}", async (int id, IProductService service) =>

{

var product = await service.GetByIdAsync(id);

return product is null ? Results.NotFound() : Results.Ok(product);

});test:

public class ProductsEndpointTests : IClassFixture<WebApplicationFactory<Program>>

{

private readonly HttpClient _client;

public ProductsEndpointTests(WebApplicationFactory<Program> factory)

{

_client = factory.CreateClient();

}

[Fact]

public async Task GetProduct_WhenExists_ReturnsOkWithProduct()

{

var response = await _client.GetAsync("/products/1");

response.EnsureSuccessStatusCode();

var product = await response.Content.ReadFromJsonAsync<ProductDto>();

Assert.NotNull(product);

Assert.Equal(1, product.Id);

}

[Fact]

public async Task GetProduct_WhenNotFound_Returns404()

{

var response = await _client.GetAsync("/products/9999");

Assert.Equal(HttpStatusCode.NotFound, response.StatusCode);

}

}When you need to replace real infrastructure with test doubles, use WithWebHostBuilder to swap out specific DI registrations:

var factory = factory.WithWebHostBuilder(builder =>

{

builder.ConfigureServices(services =>

{

services.RemoveAll<IProductService>();

services.AddScoped<IProductService, FakeProductService>();

});

});What .NET engineers should know:

- 👼 Junior: Should understand that

WebApplicationFactory<T>starts the real app in-memory, and thatCreateClient()returns anHttpClientyou can use it to call endpoints. Know how to write a basic test that checks a status code and response body. - 🎓 Middle: Expected to customize the factory with

WithWebHostBuilderto replace database contexts or external services with test doubles. Should know how to configure test-specific settings, seed a test database using EF Core with an in-memory or SQLite provider, and share the factory across tests usingIClassFixture<T>to avoid restarting the app for every test. - 👑 Senior: Should design a reusable

CustomWebApplicationFactorybase class shared across the test suite, handling concerns like database seeding, authentication bypass, and time abstraction. Understands the trade-offs between testing at the HTTP layer and testing services directly, and can integrate this approach into CI pipelines, ensuring tests remain fast and deterministic by using transactions or database resets between runs.

📚 Resources: Integration tests in ASP.NET Core

❓ How do you test authentication and authorization policies reliably?

Testing authentication and authorization in ASP.NET Core is best done at two levels. First, you test the policy logic itself in isolation — verifying that a given policy behaves correctly for specific claims, roles, or requirements. Second, you test the full HTTP behavior — verifying that protected endpoints return 401 Unauthorized or 403 Forbidden for the right scenarios. Mixing both levels into a single approach results in brittle tests that are hard to diagnose when they fail.

For HTTP-level tests using WebApplicationFactory<T>, ASP.NET Core provides AddAuthentication test helpers that let you inject a fake authenticated user with any claims you need, without dealing with real tokens or cookies.

Example:

Custom test auth handler:

public class TestAuthHandler : AuthenticationHandler<AuthenticationSchemeOptions>

{

public TestAuthHandler(IOptionsMonitor<AuthenticationSchemeOptions> options,

ILoggerFactory logger, UrlEncoder encoder)

: base(options, logger, encoder) { }

protected override Task<AuthenticateResult> HandleAuthenticateAsync()

{

var claims = new[]

{

new Claim(ClaimTypes.Name, "testuser"),

new Claim(ClaimTypes.Role, "Admin")

};

var identity = new ClaimsIdentity(claims, "Test");

var principal = new ClaimsPrincipal(identity);

var ticket = new AuthenticationTicket(principal, "Test");

return Task.FromResult(AuthenticateResult.Success(ticket));

}

}Wiring it into the factory:

var factory = factory.WithWebHostBuilder(builder =>

{

builder.ConfigureServices(services =>

{

services.AddAuthentication("Test")

.AddScheme<AuthenticationSchemeOptions, TestAuthHandler>("Test", _ => { });

});

});Testing authorization policies in isolation:

[Fact]

public async Task AdminPolicy_ShouldFail_WhenUserHasNoAdminRole()

{

// Arrange

var authService = _serviceProvider.GetRequiredService<IAuthorizationService>();

var user = new ClaimsPrincipal(new ClaimsIdentity(new[]

{

new Claim(ClaimTypes.Name, "regularuser")

}, "Test"));

// Act

var result = await authService.AuthorizeAsync(user, null, "AdminPolicy");

// Assert

Assert.False(result.Succeeded);

}Testing that an endpoint returns 401 for unauthenticated requests:

[Fact]

public async Task SecureEndpoint_WithoutAuth_Returns401()

{

var client = factory.CreateClient(new WebApplicationFactoryClientOptions

{

AllowAutoRedirect = false

});

var response = await client.GetAsync("/admin/dashboard");

Assert.Equal(HttpStatusCode.Unauthorized, response.StatusCode);

}

[Fact]

public async Task SecureEndpoint_WithWrongRole_Returns403()

{

// client configured with TestAuthHandler that has no Admin role

var response = await _client.GetAsync("/admin/dashboard");

Assert.Equal(HttpStatusCode.Forbidden, response.StatusCode);

}What .NET engineers should know:

- 👼 Junior: Should understand the difference between

401 Unauthorized(not authenticated — no valid identity) and403 Forbidden(authenticated but not authorized — wrong role or policy). Know how to disable authentication in tests usingAllowAnonymousor a fake scheme to isolate endpoint logic from auth concerns. - 🎓 Middle: Expected to implement a

TestAuthHandlerto inject specific users with chosen claims and roles into integration tests. Should know how to test both the happy path (authorized user gets 200) and the failure paths (unauthenticated gets 401, wrong role gets 403). Should also know how to testIAuthorizationServicepolicy logic directly in unit tests without spinning up the full HTTP stack. - 👑 Senior: Should design a flexible test infrastructure that makes it easy to run any integration test as different user personas (anonymous, regular user, admin, etc.) without duplicating setup code. Understands how to test custom

IAuthorizationRequirementandIAuthorizationHandlerimplementations in isolation, how to test resource-based authorization, and how to avoid tests that pass because auth is accidentally disabled rather than properly configured.

📚 Resources:

❓ How do you test middleware behavior?

Middleware testing in ASP.NET Core can be approached at two levels. The first is testing the middleware in complete isolation using TestServer or a minimal RequestDelegate pipeline — useful when the middleware has no dependency on the rest of the application. The second is testing it through WebApplicationFactory<T> as part of the full pipeline — useful when you want to verify that the middleware integrates correctly with routing, exception handling, and other middleware in the chain.

For most real-world scenarios, the WebApplicationFactory<T> The approach is preferred because it tests the middleware exactly as it runs in production, including its registration order in the pipeline.

Code example

The middleware under test (correlation ID):

public class CorrelationIdMiddleware

{

private const string CorrelationIdHeader = "X-Correlation-ID";

private readonly RequestDelegate _next;

public CorrelationIdMiddleware(RequestDelegate next) => _next = next;

public async Task InvokeAsync(HttpContext context)

{

if (!context.Request.Headers.TryGetValue(CorrelationIdHeader, out var correlationId))

{

correlationId = Guid.NewGuid().ToString();

}

context.Response.Headers[CorrelationIdHeader] = correlationId.ToString();

await _next(context);

}

}Testing middleware in isolation using a minimal pipeline:

[Fact]

public async Task CorrelationIdMiddleware_WhenNoHeaderProvided_GeneratesNewCorrelationId()

{

// Arrange

var builder = WebApplication.CreateBuilder();

await using var app = builder.Build();

app.Use(async (context, next) =>

{

var middleware = new CorrelationIdMiddleware(next.Invoke);

await middleware.InvokeAsync(context);

});

app.MapGet("/test", () => Results.Ok());

var testServer = new TestServer(app);

var client = testServer.CreateClient();

// Act

var response = await client.GetAsync("/test");

// Assert

Assert.True(response.Headers.Contains("X-Correlation-ID"));

Assert.NotEmpty(response.Headers.GetValues("X-Correlation-ID").First());

}

[Fact]

public async Task CorrelationIdMiddleware_WhenHeaderProvided_PropagatesExistingId()

{

var expectedId = "my-correlation-id-123";

var request = new HttpRequestMessage(HttpMethod.Get, "/test");

request.Headers.Add("X-Correlation-ID", expectedId);

var response = await _client.SendAsync(request);

Assert.Equal(expectedId, response.Headers.GetValues("X-Correlation-ID").First());

}Testing error mapping middleware (ProblemDetails):

[Fact]

public async Task ErrorMiddleware_WhenUnhandledExceptionOccurs_ReturnsProblemDetails()

{

// Arrange — factory configured to throw from a test endpoint

var factory = _factory.WithWebHostBuilder(builder =>

{

builder.ConfigureServices(services =>

{

services.AddProblemDetails();

});

builder.Configure(app =>

{

app.UseExceptionHandler();

app.MapGet("/error-test", () =>

{

throw new InvalidOperationException("Something went wrong");

});

});

});

var client = factory.CreateClient();

// Act

var response = await client.GetAsync("/error-test");

// Assert

Assert.Equal(HttpStatusCode.InternalServerError, response.StatusCode);

Assert.Equal("application/problem+json", response.Content.Headers.ContentType?.MediaType);

var problem = await response.Content.ReadFromJsonAsync<ProblemDetails>();

Assert.NotNull(problem);

Assert.Equal(500, problem.Status);

}Testing response headers middleware:

[Fact]

public async Task SecurityHeadersMiddleware_AddsExpectedHeaders()

{

var response = await _client.GetAsync("/");

Assert.Equal("nosniff",

response.Headers.GetValues("X-Content-Type-Options").First());

Assert.Equal("DENY",

response.Headers.GetValues("X-Frame-Options").First());

Assert.Equal("max-age=31536000",

response.Headers.GetValues("Strict-Transport-Security").First());

}What .NET engineers should know:

- 👼 Junior: Should understand that middleware can be tested through the HTTP layer using

WebApplicationFactory<T>by checking response headers, status codes, and response bodies. Know how to inspectresponse.Headersin a test and understand what correlation IDs are used for — tracing a single request across multiple services or log entries. - 🎓 Middle: Expected to test middleware both in isolation (using a minimal

RequestDelegatepipeline orTestServer) and as part of the full application pipeline. Should know how to set up test endpoints specifically designed to trigger certain middleware behavior (e.g., an endpoint that throws to test error mapping, or one that returns a specific status code to test response transformation). Should understandProblemDetailsand how to assert on its structure in tests. - 👑 Senior: Should design middleware that is inherently easy to test — stateless, with dependencies injected rather than resolved directly from

HttpContext.RequestServices. Understands the ordering implications of middleware registration and writes tests that verify middleware executes in the correct sequence. Can test middleware that short-circuits the pipeline (e.g., rate limiters, auth middleware) and verify it does not call_nextunder specific conditions. Integrates middleware tests into the CI pipeline to catch regressions across cross-cutting concerns, such as security headers and error response formats.

📚 Resources:

❓ How do you test versioning behavior (URL/header/query) and deprecation responses?

API versioning has three failure modes worth testing: the wrong version resolves to the wrong handler, an unsupported version returns the wrong status code, and a deprecated version stops returning deprecation signals. None of these are caught by testing a single version in isolation — versioning tests must explicitly exercise the routing and negotiation layer.

For each versioning strategy — URL segment (/v1/orders), header (api-version: 1.0), query string (?api-version=1.0) — write a test that sends the version identifier and asserts the correct handler responded. The fastest way to verify this is to include a version discriminator in the response body or a custom header, so the test does not have to infer which handler ran from the response shape alone.

[Theory]

[InlineData("/api/v1/orders", null, null)] // URL versioning

[InlineData("/api/orders", "api-version: 1.0", null)] // header versioning

[InlineData("/api/orders?api-version=1.0", null, "1.0")] // query versioning

public async Task VersionRouting_ResolvesCorrectHandler(

string path, string? header, string? query)

{

var request = new HttpRequestMessage(HttpMethod.Get, path);

if (header != null) request.Headers.Add("api-version", "1.0");

var response = await _client.SendAsync(request);

Assert.Equal(HttpStatusCode.OK, response.StatusCode);

Assert.Equal("1.0", response.Headers.GetValues("api-supported-versions").First());

}Deprecation responses need two explicit tests: that the deprecated version still works (returns 200) and that it signals deprecation correctly via the Sunset header (RFC 8594) or Deprecation header. A deprecated version that silently returns 200 with no signal gives consumers no reason to migrate.

[Fact]

public async Task DeprecatedVersion_Returns200_WithSunsetHeader()

{

var response = await _client.GetAsync("/api/v1/orders");

Assert.Equal(HttpStatusCode.OK, response.StatusCode);

Assert.True(response.Headers.Contains("Sunset"),

"Deprecated version must include Sunset header");

}

[Fact]

public async Task UnsupportedVersion_Returns400_WithProblemDetails()

{

var response = await _client.GetAsync("/api/v99/orders");

Assert.Equal(HttpStatusCode.BadRequest, response.StatusCode);

var body = await response.Content.ReadFromJsonAsync<ProblemDetails>();

Assert.Contains("unsupported", body!.Detail, StringComparison.OrdinalIgnoreCase);

}What .NET engineers should know:

- 👼 Junior: Test each versioning strategy explicitly — do not assume URL, header, and query routing all work because one of them does.

- 🎓 Middle: Assert deprecation headers on deprecated versions and verify unsupported versions return structured error responses, not 404s or unhandled exceptions.

- 👑 Senior: Treat versioning as a contract test — each version's response shape, deprecation signals, and sunset dates are commitments to consumers; version contract tests should run in CI and fail when a version's behavior changes unexpectedly.

📚 Resources: The Sunset HTTP Header Field

gRPC API testing

❓ How do you integration-test a gRPC service in .NET without going “full E2E”?

gRPC services in .NET can be integration-tested in-memory using the same WebApplicationFactory<T> approach used for REST APIs, combined with a gRPC channel configured to communicate over the in-memory test server rather than a real network socket. This gives you the full gRPC pipeline — interceptors, authentication, serialization, and service logic — without deploying anything or opening real ports.

The key is using GrpcChannel.ForAddress with a custom HttpHandler that routes requests through the WebApplicationFactory's internal HttpClient. This is provided by the Grpc.AspNetCore and Grpc.Net.Client packages, and the Microsoft.AspNetCore.Mvc.Testing package ties it all together.

Example:

The gRPC service under test:

public class GreeterService : Greeter.GreeterBase

{

private readonly IGreetingService _greetingService;

public GreeterService(IGreetingService greetingService)

{

_greetingService = greetingService;

}

public override async Task<HelloReply> SayHello(

HelloRequest request, ServerCallContext context)

{

var message = await _greetingService.BuildGreetingAsync(request.Name);

return new HelloReply { Message = message };

}

}Setting up the in-memory gRPC channel:

public class GrpcIntegrationTests : IClassFixture<WebApplicationFactory<Program>>

{

private readonly WebApplicationFactory<Program> _factory;

public GrpcIntegrationTests(WebApplicationFactory<Program> factory)

{

_factory = factory;

}

private GrpcChannel CreateChannel()

{

// Route the gRPC channel through the in-memory test server

var handler = factory.Server.CreateHandler();

return GrpcChannel.ForAddress("http://localhost", new GrpcChannelOptions

{

HttpHandler = handler

});

}

}Testing a unary RPC call:

[Fact]

public async Task SayHello_WithValidName_ReturnsGreeting()

{

// Arrange

var channel = CreateChannel();

var client = new Greeter.GreeterClient(channel);

// Act

var reply = await client.SayHelloAsync(new HelloRequest { Name = "Alice" });

// Assert

Assert.Equal("Hello, Alice!", reply.Message);

}

[Fact]

public async Task SayHello_WithEmptyName_ThrowsRpcException()

{

var channel = CreateChannel();

var client = new Greeter.GreeterClient(channel);

var ex = await Assert.ThrowsAsync<RpcException>(() =>

client.SayHelloAsync(new HelloRequest { Name = "" }).ResponseAsync);

Assert.Equal(StatusCode.InvalidArgument, ex.StatusCode);

}Testing a server-streaming RPC:

[Fact]

public async Task GetUpdates_StreamsExpectedMessages()

{

var channel = CreateChannel();

var client = new UpdateService.UpdateServiceClient(channel);

var streamingCall = client.GetUpdates(new UpdateRequest { Topic = "news" });

var messages = new List<UpdateReply>();

await foreach (var message in streamingCall.ResponseStream.ReadAllAsync())

{

messages.Add(message);

}

Assert.NotEmpty(messages);

Assert.All(messages, m => Assert.Equal("news", m.Topic));

}Replacing dependencies in the factory for controlled scenarios:

private GrpcChannel CreateChannelWithFakes()

{

var factory = _factory.WithWebHostBuilder(builder =>

{

builder.ConfigureServices(services =>

{

services.RemoveAll<IGreetingService>();

services.AddScoped<IGreetingService, FakeGreetingService>();

});

});

var httpClient = factory.CreateClient();

return GrpcChannel.ForAddress(httpClient.BaseAddress!, new GrpcChannelOptions

{

HttpClient = httpClient

});

}Testing gRPC interceptors (e.g., logging or auth):

[Fact]

public async Task AuthInterceptor_WithoutMetadata_ReturnsUnauthenticated()

{

var channel = CreateChannel(); // no auth headers injected

var client = new SecureService.SecureServiceClient(channel);

var ex = await Assert.ThrowsAsync<RpcException>(() =>

client.GetSecretAsync(new SecretRequest()).ResponseAsync);

Assert.Equal(StatusCode.Unauthenticated, ex.StatusCode);

}

[Fact]

public async Task AuthInterceptor_WithValidToken_ReturnsOk()

{

var channel = CreateChannel();

var headers = new Metadata { { "Authorization", "Bearer valid-test-token" } };

var client = new SecureService.SecureServiceClient(channel);

var reply = await client.GetSecretAsync(new SecretRequest(), headers);

Assert.NotNull(reply);

}What .NET engineers should know:

- 👼 Junior: Should understand that gRPC services can be tested in-memory without a real server or network by routing a

GrpcChannelthrough theWebApplicationFactory'sHttpClient. Know how to create a typed gRPC client from the channel and make basic unary calls. Should understand thatRpcExceptionis the gRPC equivalent of an HTTP error response and know how to assert on itsStatusCode. - 🎓 Middle: Expected to set up the in-memory gRPC channel correctly, including configuring

GrpcChannelOptionswith the testHttpClient. Should know how to test all four gRPC communication patterns — unary, server streaming, client streaming, and bidirectional streaming — and how to useWithWebHostBuilderto replace infrastructure dependencies with fakes for controlled test scenarios. Should also know how to pass metadata headers in test calls to simulate authentication or tracing. - 👑 Senior: Should design a shared test base class or fixture that encapsulates channel creation, fake registration, and authentication setup so individual tests stay focused on behavior rather than infrastructure. Understands how to test gRPC interceptors in isolation by registering them explicitly in a minimal pipeline and verifying they correctly modify request/response metadata or short-circuit calls. Can identify when in-memory tests are sufficient versus when a real network test against a containerized service (e.g., using Testcontainers) is necessary — for example, when testing TLS configuration or HTTP/2 framing behavior that the in-memory transport abstracts away.

📚 Resources:

❓ How do you test gRPC status codes and error details?

In gRPC, errors are not HTTP status codes — they are RpcException instances carrying a StatusCode enum value and an optional detail message. Testing them means asserting that the right StatusCode is thrown for the right scenario, and optionally that the Status.Detail message contains meaningful information for the caller.

The most important status codes to cover in tests are OK (implicit on success), NotFound, InvalidArgument, AlreadyExists, PermissionDenied, and Internal. Each maps to a specific failure scenario in your service logic.

Code example:

[Fact]

public async Task GetProduct_WhenNotFound_ThrowsNotFoundStatus()

{

var client = new ProductService.ProductServiceClient(CreateChannel());

var ex = await Assert.ThrowsAsync<RpcException>(() =>

client.GetProductAsync(new GetProductRequest { Id = 999 }).ResponseAsync);

Assert.Equal(StatusCode.NotFound, ex.StatusCode);

Assert.Contains("999", ex.Status.Detail);

}

[Fact]

public async Task CreateProduct_WithEmptyName_ThrowsInvalidArgument()

{

var client = new ProductService.ProductServiceClient(CreateChannel());

var ex = await Assert.ThrowsAsync<RpcException>(() =>

client.CreateProductAsync(new CreateProductRequest { Name = "" }).ResponseAsync);

Assert.Equal(StatusCode.InvalidArgument, ex.StatusCode);

}What .NET engineers should know:

- 👼 Junior: Should know that gRPC errors surface as

RpcExceptionand thatex.StatusCodeis how you assert on the error type. Understand the most common status codes —NotFound,InvalidArgument,Unauthenticated,PermissionDenied, andInternal— and what scenario each represents. - 🎓 Middle: Expected to assert on both

StatusCodeandStatus.Detailto verify the error message is meaningful and not leaking internal implementation details. Should know how to throw the correctRpcExceptionfrom service code usingnew RpcException(new Status(StatusCode.NotFound, "Product 999 not found"))and test that contract explicitly. - 👑 Senior: Should enforce a consistent error mapping strategy across all gRPC services — typically using an interceptor that catches domain exceptions and maps them to the appropriate

StatusCode— and write tests that verify the interceptor mapping rather than testing error throwing in every individual service method. Can also leveragegoogle.rpc.Statusrich error details (viaGrpc.StatusProto) for structured error payloads when clients need machine-readable error information beyond a status code and message string.

📚 Resources: Error handling

❓ How do you validate metadata/headers in gRPC calls?

In gRPC, HTTP headers are called metadata — key/value pairs sent alongside a request via the Metadata class. Testing metadata validation means verifying two things: that your service correctly rejects calls with missing or invalid metadata, and that your interceptors or middleware correctly read, propagate, or enrich metadata before it reaches service logic.

The cleanest way to test this is through the in-memory channel approach — passing metadata explicitly in test calls and asserting on the resulting RpcException status code or response behavior.

Code example:

[Fact]

public async Task Call_WithoutAuthToken_ReturnsUnauthenticated()

{

var client = new OrderService.OrderServiceClient(CreateChannel());

var ex = await Assert.ThrowsAsync<RpcException>(() =>

client.GetOrderAsync(new GetOrderRequest { Id = 1 }).ResponseAsync);

Assert.Equal(StatusCode.Unauthenticated, ex.StatusCode);

}

[Fact]

public async Task Call_WithValidToken_PropagatesCorrelationId()

{

var client = new OrderService.OrderServiceClient(CreateChannel());

var headers = new Metadata

{

{ "Authorization", "Bearer valid-test-token" },

{ "X-Correlation-ID", "test-correlation-123" }

};

var reply = await client.GetOrderAsync(new GetOrderRequest { Id = 1 }, headers);

Assert.NotNull(reply);

}What .NET engineers should know:

- 👼 Junior: Should know that gRPC metadata is the equivalent of HTTP headers and is passed as a

Metadataobject in each client call. Understand how to add entries toMetadataand pass them as the second argument to a gRPC client method. Know that missing auth metadata typically results inStatusCode.Unauthenticated. - 🎓 Middle: Expected to test interceptors that read and validate metadata in isolation — injecting a fake

ServerCallContextor using the in-memory pipeline to verify the interceptor short-circuits correctly when required metadata is absent or malformed. Should know how to test that correlation IDs are read from incoming metadata and written to outgoing response metadata or propagated to downstream calls viaIHttpContextAccessor. - 👑 Senior: Should design a metadata validation interceptor that centralizes header concerns — auth token extraction, correlation ID propagation, tenant resolution — and write focused tests against that interceptor rather than duplicating metadata assertions across every service test. Understands how to use

CallCredentialsfor token-based auth in production gRPC clients and how to stub that in tests cleanly without coupling tests to the token generation mechanism.

❓ How do you test deadlines and cancellation in gRPC?

gRPC has first-class support for two related but distinct cancellation concepts. A deadline is set by the client and tells the server "complete this call before this point in time or I will stop waiting." A cancellation is an explicit signal — either from the client abandoning the call or from the server deciding to stop processing. Both surface as RpcException with StatusCode.DeadlineExceeded or StatusCode.Cancelled respectively.

Testing these reliably requires either controlling time or introducing deliberate server-side delays so you can trigger the condition without slowing down or making tests flaky.

Example:

[Fact]

public async Task Call_WhenDeadlineExceeded_ThrowsDeadlineExceeded()

{

var client = new ReportService.ReportServiceClient(CreateChannel());

var ex = await Assert.ThrowsAsync<RpcException>(() =>

client.GenerateReportAsync(

new ReportRequest { Type = "heavy" },

deadline: DateTime.UtcNow.AddMilliseconds(1) // extremely tight deadline

).ResponseAsync);

Assert.Equal(StatusCode.DeadlineExceeded, ex.StatusCode);

}

[Fact]

public async Task Call_WhenClientCancels_ThrowsCancelled()

{

var client = new ReportService.ReportServiceClient(CreateChannel());

using var cts = new CancellationTokenSource();

var call = client.GenerateReportAsync(

new ReportRequest { Type = "heavy" },

cancellationToken: cts.Token);

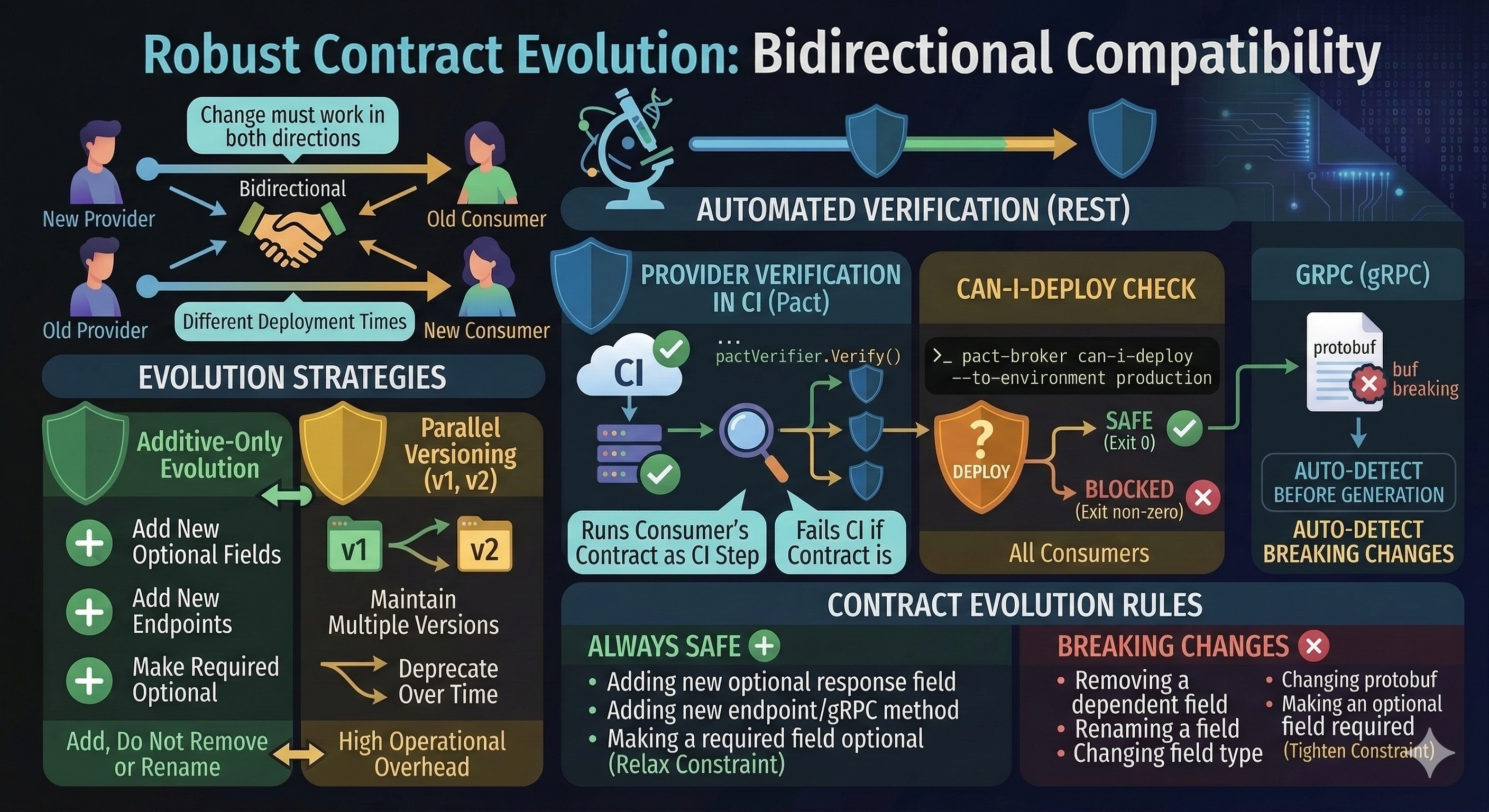

cts.Cancel(); // cancel before server responds